Students are already using Artificial Intelligence, so what should libraries do about it? Drawing on research and observation at the University of Malta Library, this intern-led article investigates how students actually use AI, and whether library services are keeping pace. It ultimately reframes the conversation: not what libraries can build, but what users truly need.

Unveiling Attachment Styles with AI

MindOnly has developed an Artificial Intelligence tool that can assess your attachment style. This Malta-based research project, supported by Xjenza, has created a platform that reads non-verbal cues to better understand users. This may prove to be a useful personal development tool and, crucially, a valuable resource for mental health practitioners.

Smarter Swimming: How AI and Wearables Are Redefining Performance in the Pool

When a curious mother and seasoned AI researcher dipped her toes into the world of competitive swimming, she did not expect to launch one of the most comprehensive sports-tech initiatives Malta has ever seen. Now, through two intertwined projects – DIVE and SWIM-360 – Dr Vanessa Camilleri and her team at the University of Malta are capturing the full stroke of what makes swimmers fast, efficient, and injury-free.

ADACE3 – Revolutionising Bookkeeping with AI

Filling in an FS3 form (Statement of Earnings/Tax Return forms) is already a headache, but imagine trying to prepare accounts for a small business. It is a time-consuming and laborious process, and doing things by hand can lead to serious mistakes. Why haven’t we automated accounting yet?

AI Meets the Maltese Courts: Can AI Simplify Small Claims Proceedings?

The idea behind the AMPS project is to investigate the use of natural language processing and machine learning to predict the outcome of Maltese court cases, specifically those within the Small Claims Tribunal, which deals with claims of up to €5000. The objective is to provide a tool to help adjudicators decide cases more efficiently while respecting and integrating the ethical and safety concerns which inevitably arise. By aligning with best practices and guidelines, the project team intends not only to develop a tool for the courts but also to advance the use of the Maltese language in relation to Artificial Intelligence (AI).

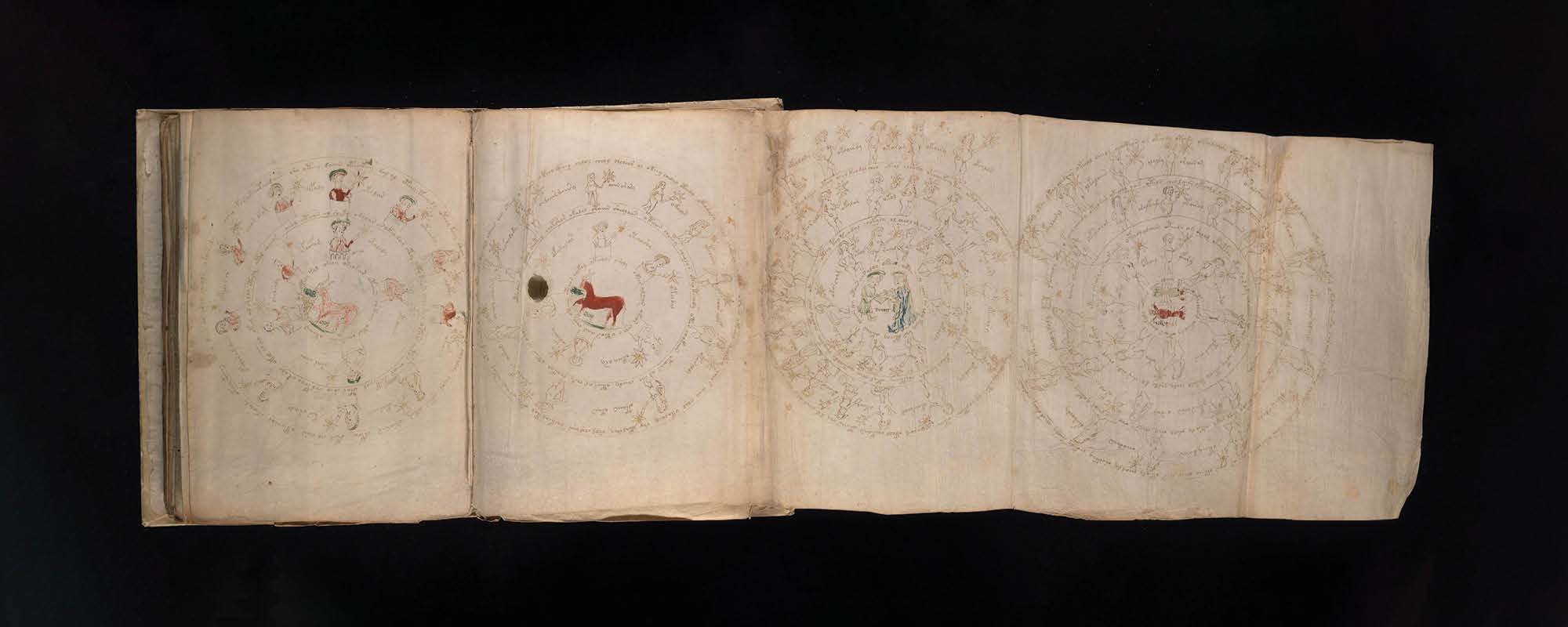

The Voynich Manuscript

The Voynich Manuscript is one of the most enduring historical enigmas, attracting multidisciplinary interest from around the world. Jonathan Firbank speaks with UM’s wing of the Voynich Research Group about the history, mystery, and cutting-edge technology brought together by this unique medieval text.

Tweeting About the News

Nicholas Mamo has developed an algorithm that analyses Twitter feeds and extracts information about events. This type of artificial intelligence has been designed to automatically identify the participants involved in the events and understand what happened based on the tweets.

SmartAP: Assisting Pilots with AI

As passengers, we often overlook the complexity and challenges faced by pilots as they navigate the skies. THINK explores SmartAP, a cutting-edge AI technology that could help pilots combat stalling and difficult landing situations. Buckle up, sit back, and enjoy the journey!

Modl.ai: Creating the Ultimate AI Game Testers

A spin-out from the University of Malta’s Institute of Digital Games is working on AI-run game-testing software. The engine would run thousands of low-level testing rounds before humans engage in high-level testing of a game prior to market release. Modl.ai co-founder Georgios N. Yannakakis tells THINK how his team aspires to change the game.

Is ChatGPT an Aid or a Cheat?

From the perspective of a student and an academic, how can ChatGPT be used in academia? Nathanael Schembri explores how the AI could be used to aid in assessments as well as in scholarly work, such as writing dissertations and research papers.
