By Ryan Abela

Within artificial intelligence, neural networks have always fascinated me. Loosely inspired by the way neurons in the human brain work, artificial neural networks consist of very simple mathematical functions connected to each other through a set of adjustable parameters. These networks can solve problems ranging from mathematical equations to far more abstract tasks, such as detecting objects in a photo or recognising someone’s voice.

Artificial neural networks normally need to be trained first. Say we want a neural network that can detect whether there is an apple in a photo. We could feed it thousands of different photos, some with apples and some without, and fine-tune the network’s parameters until it starts classifying these photos correctly.
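To make "fine-tuning the parameters" a little more concrete, here is a minimal Python sketch of a single artificial neuron learning to tell apples from non-apples. It is a toy built on loud assumptions: each "photo" is reduced to two made-up numbers (redness and roundness), the six data points are invented for illustration, and the update rule is plain gradient descent on logistic regression. That is one common way to train a neuron, not the method behind any particular Google or Facebook product.

```python
import math

# Hypothetical toy data: each "photo" is reduced to two invented features
# (redness, roundness) between 0 and 1; label 1 = apple, 0 = no apple.
DATA = [
    ((0.9, 0.8), 1),
    ((0.8, 0.9), 1),
    ((0.7, 0.7), 1),
    ((0.2, 0.3), 0),
    ((0.1, 0.6), 0),
    ((0.3, 0.1), 0),
]


def sigmoid(z):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def predict(weights, bias, features):
    """A single artificial neuron: a weighted sum followed by a squashing function."""
    z = sum(w * x for w, x in zip(weights, features)) + bias
    return sigmoid(z)  # read as "probability that this photo contains an apple"


def train(data, epochs=2000, learning_rate=0.5):
    """Repeatedly nudge the parameters to reduce the classification error."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for features, label in data:
            error = predict(weights, bias, features) - label
            # Move each parameter against the gradient of the log-loss.
            weights = [w - learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias -= learning_rate * error
    return weights, bias


if __name__ == "__main__":
    weights, bias = train(DATA)
    for features, label in DATA:
        p = predict(weights, bias, features)
        verdict = "apple" if p > 0.5 else "no apple"
        print(features, verdict, f"(p={p:.2f}, true label={label})")
```

After training, each of the six toy examples should come out classified correctly. Real image classifiers work on raw pixels and have vastly more parameters, but the loop is the same idea: predict, measure the error, nudge the parameters, repeat.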

Google and Facebook use some of these techniques in their photo applications. A couple of months ago, Google released an app that lets you find your photos of specific objects by searching for words like ‘dog’ or ‘house’. To make this possible, Google built an artificial neural network trained on images of dogs, other animals, and so on. But here comes the fun part. Shortly afterwards, some of Google’s software engineers wrote an article about how to analyse and visualise what is going on inside the neural network.

Neural networks have been used for decades and rest on solid mathematical foundations. Yet what goes on inside a trained network is very hard to visualise, because a classification model is essentially represented by thousands, often millions, of numerical parameters (the strengths of its connections), which to the human eye look almost random. In their experiment, Google’s engineers turned the network upside down: rather than adjusting the network to fit an image, they fed it an image of random noise and gradually adjusted the image itself to see what patterns the network would detect in it. They then took the experiment one step further by starting from a real photo instead of noise. The picture was passed through the neural network many times, each pass amplifying whatever patterns the network had detected, to see which patterns would emerge.
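The "turn it upside down" trick can be sketched in a few lines of Python. This is a toy illustration under explicit assumptions, not Google’s code: a single hand-written 3×3 detector (the hypothetical `KERNEL`) stands in for a deep network trained on photos, and the helper names `responses`, `score`, `dream_step` and `dream` are made up for this example. What it shares with Deep Dream is the core idea of gradient ascent on the image: the network’s parameters stay fixed, and each pass nudges the pixels in whichever direction makes the detector respond more strongly.

```python
import random

# Hypothetical 3x3 "pattern detector" standing in for a trained network:
# it responds to a bright pixel surrounded by darker ones. A real network
# stacks many layers of learned detectors.
KERNEL = [[-1, -1, -1],
          [-1,  8, -1],
          [-1, -1, -1]]


def responses(image):
    """Slide the detector over the image (a 'valid' 2D correlation)."""
    h, w = len(image), len(image[0])
    return [[sum(KERNEL[a][b] * image[i + a][j + b]
                 for a in range(3) for b in range(3))
             for j in range(w - 2)]
            for i in range(h - 2)]


def score(image):
    """How strongly the detector 'sees' its pattern: half the sum of squared responses."""
    return 0.5 * sum(r * r for row in responses(image) for r in row)


def dream_step(image, step_size=0.05):
    """One pass: nudge every pixel in the direction that raises the score."""
    h, w = len(image), len(image[0])
    grad = [[0.0] * w for _ in range(h)]
    # Send each response back to the pixels that produced it (the gradient).
    for i, row in enumerate(responses(image)):
        for j, r in enumerate(row):
            for a in range(3):
                for b in range(3):
                    grad[i + a][j + b] += r * KERNEL[a][b]
    # Normalise so each pass changes pixels by about step_size on average.
    mean_abs = sum(abs(g) for row in grad for g in row) / (h * w)
    scale = step_size / (mean_abs + 1e-8)
    return [[image[y][x] + scale * grad[y][x] for x in range(w)]
            for y in range(h)]


def dream(image, passes=20):
    """Feed the image back through the detector repeatedly, amplifying what it sees."""
    for _ in range(passes):
        image = dream_step(image)
    return image


if __name__ == "__main__":
    rng = random.Random(0)
    noise = [[rng.random() for _ in range(6)] for _ in range(6)]
    dreamed = dream(noise)
    print(f"score before: {score(noise):.2f}, after: {score(dreamed):.2f}")
```

Calling `dream` on a real photo (as a grid of brightness values) instead of noise mirrors the second experiment. With a real trained network, the amplified patterns would be whatever that network had learned to look for.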

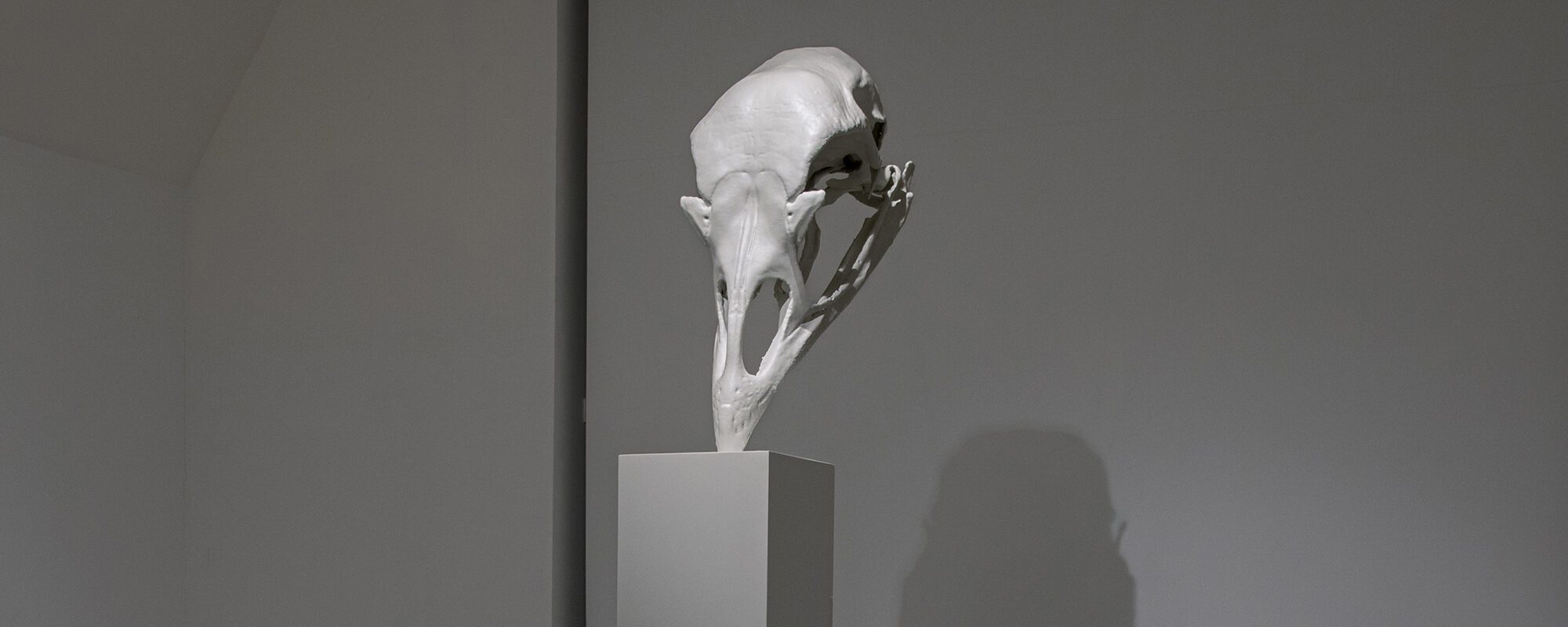

The results of these experiments amazed people around the world. Photos passed through Google’s artificial neural network turned into hallucinatory, surreal imagery, with dog faces, eyes, and buildings emerging from them. Google named the technique Deep Dream, and now anyone can Deep Dream their own photo and turn it into a dreamscape. Dalí: eat your heart out.

Deep Dream your own photo at http://deepdreamgenerator.com or with apps like http://dreamify.io. Think magazine interns got carried away and Deep Dreamed all our cover artwork. Find them on Twitter at #ThinkDream or on Facebook at http://bit.ly/ThinkDream
